Scary AI: How Emperor AI of Han Reveals What Machines Really Are

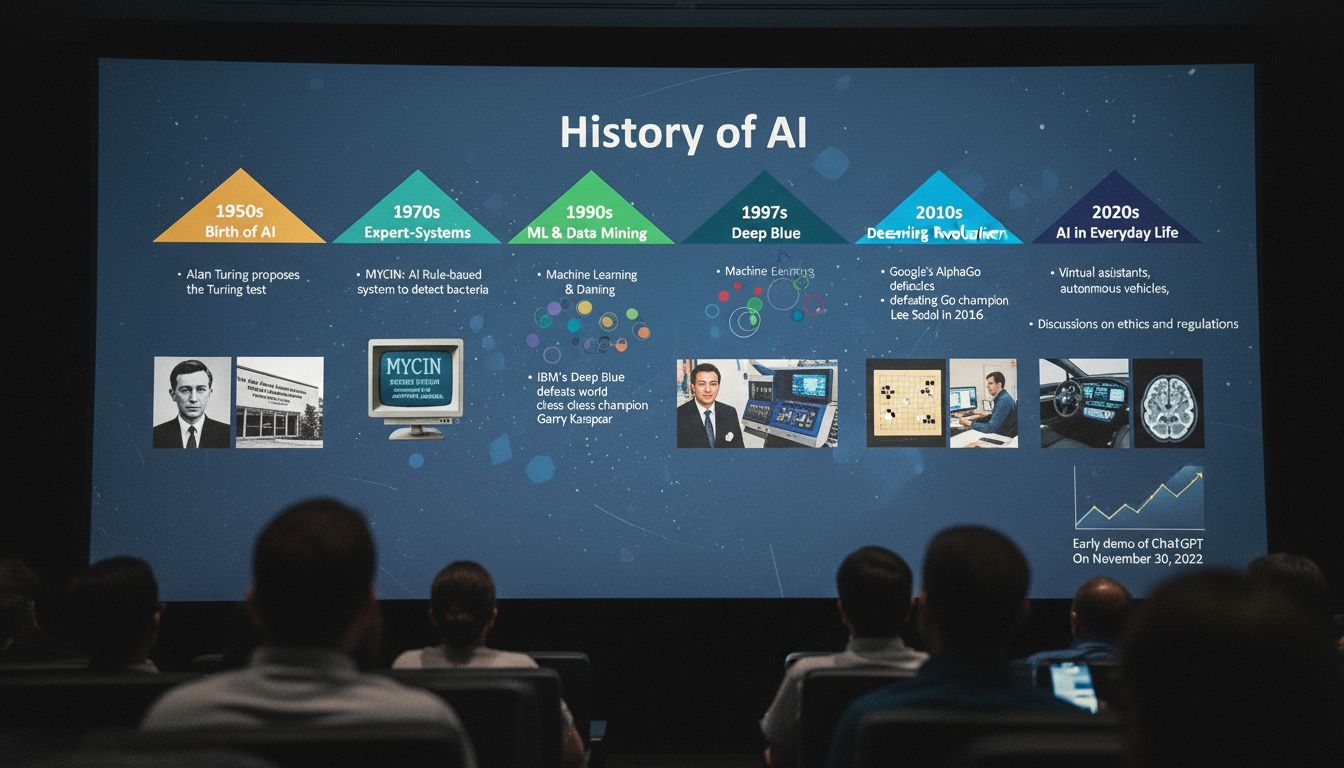

The term scary AI gets used loosely, but what actually makes artificial intelligence frightening? Part of the answer lies in history. The concept of emperor AI draws on ancient Chinese governance models, particularly the idea of a single, all-knowing authority. When people reference the emperor AI of Han, they are exploring what happens when machine systems gain centralized control. Meanwhile, AI chi describes the balance between human judgment and algorithmic output. Understanding AI of Han as a metaphor helps us think more clearly about where AI power is heading.

AI is not inherently dangerous, but it does become concerning when it operates without human oversight. The combination of speed, scale, and opacity is what makes some AI systems feel threatening. These are real concerns worth examining carefully rather than dismissing or overhyping.

What Makes AI Scary: Autonomy, Power, and Emperor AI

The phrase scary AI often refers to systems that make decisions humans cannot easily audit. Think of hiring algorithms that reject candidates without explanation, or content moderation tools that remove posts based on opaque rules. That lack of transparency is unsettling.

The emperor AI concept frames this problem historically. In imperial China, the Han dynasty emperor held absolute authority over millions of people, and few could challenge his decisions. Some critics argue that powerful AI systems are developing a similar kind of unaccountable authority in modern life.

What separates useful automation from genuinely alarming AI is the question of accountability. When a system makes a consequential mistake, who answers for it? That gap between machine action and human responsibility is where much of the real concern lives.

The Legend of Emperor AI of Han: History Meets Machine Learning

The emperor AI of Han metaphor became popular in tech commentary circles as shorthand for overreach. Han dynasty emperors used vast bureaucracies to collect data on their populations, organize resources, and project power. Today’s large AI platforms do something structurally similar at digital scale.

This is not a claim that AI companies are malicious. It is a structural observation. When a single system processes billions of interactions and shapes what people see, read, and buy, the emperor AI of Han comparison points at something real about concentration of influence.

The lesson from history is not that centralized systems always fail. Many Han emperors governed well. The lesson is that unchecked authority, whether human or algorithmic, needs external accountability to remain trustworthy over time.

AI Chi: Balancing Human Values and Machine Decisions

The concept of AI chi borrows from Eastern philosophy to describe a productive tension between human intuition and machine precision. Chi, in traditional Chinese thought, refers to vital energy or life force. Applied to AI, AI chi suggests that machines work best when they channel rather than override human judgment.

This is not mystical thinking. Research in human-computer interaction suggests that hybrid systems, where humans and algorithms share decision-making, can outperform either alone on some tasks. The AI chi model asks us to treat AI as a tool with energy that must be directed, not as an autonomous authority.

Practically, this means designing systems with meaningful human review at key decision points. It means giving users the ability to understand and contest automated decisions. The AI chi approach is less about fear and more about disciplined collaboration.
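One way to picture human review at key decision points is a small routing rule that decides whether an automated decision can be applied directly or must go to a person first. The sketch below is only an illustration under stated assumptions: the `Decision` fields, the `route` function, the 0.9 confidence threshold, and the choice of "reject" as a high-stakes outcome are all hypothetical and would need to be set by whoever is accountable for the system.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    """A single automated decision (hypothetical structure for illustration)."""
    subject_id: str     # who or what the decision is about
    outcome: str        # e.g. "approve" or "reject"
    confidence: float   # model's self-reported confidence in [0, 1]
    explanation: str    # plain-language reason shown to the affected person


def route(decision, threshold=0.9, high_stakes_outcomes=("reject",)):
    """Return "auto" if the decision may be applied directly,
    otherwise "human_review".

    Assumed policy: outcomes that harm the subject always go to a person,
    and so does anything the model is not confident about.
    """
    if decision.outcome in high_stakes_outcomes:
        return "human_review"
    if decision.confidence < threshold:
        return "human_review"
    return "auto"


if __name__ == "__main__":
    d = Decision("candidate-17", "reject", 0.97, "missing required certification")
    print(route(d))  # a rejection is routed to a person regardless of confidence
```

Storing the explanation alongside the outcome is what makes the decision contestable: the affected person and the reviewer see the same stated reason.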

Risk Assessment and the Thinking Machine

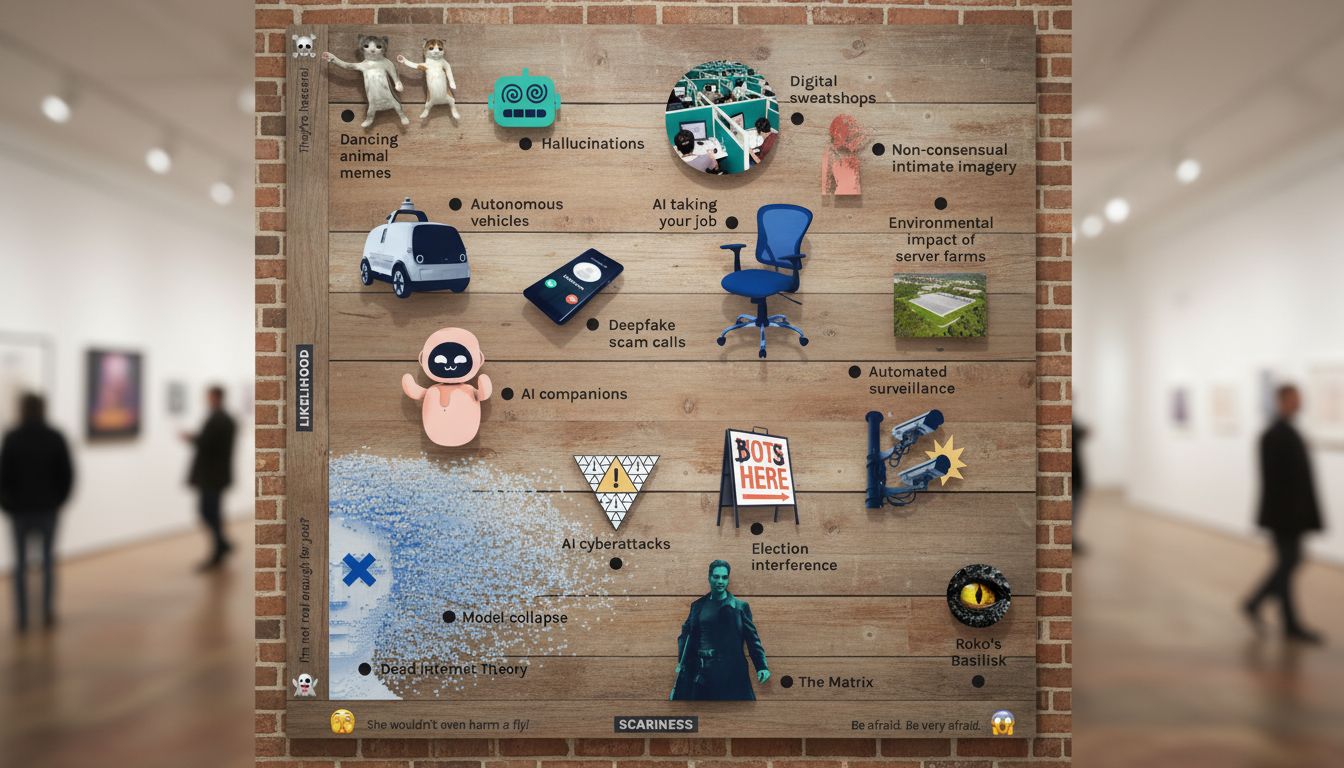

Any serious look at AI risk has to start with specifics. Generative models can produce misinformation at scale. Facial recognition systems have documented accuracy gaps across demographic groups. Autonomous vehicles still struggle with edge cases. These are concrete risks that deserve concrete responses.

The scary AI narrative sometimes conflates these real, tractable problems with science fiction scenarios. Both deserve attention, but they require different tools. Regulation, technical auditing, and public transparency address the immediate risks far more effectively than general alarm.

AI of Han: Cultural Context in Modern Artificial Intelligence

The AI of Han framing reminds us that AI development does not happen in a cultural vacuum. China has invested heavily in AI infrastructure, and the Han majority culture shapes some of its deployment priorities. Facial recognition tools trained mostly on Han faces have been reported to perform differently on minority populations, a problem with serious human rights implications.

This pattern appears globally, not only in China. AI systems trained predominantly on data from one demographic group often show reduced accuracy for others. The AI of Han discussion, at its most useful, prompts researchers and policymakers to ask: whose data trained this model, and who bears the cost when it fails?
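A simple audit makes that question measurable: compute accuracy separately for each demographic group and look at the gap between the best-served and worst-served group. The following is a minimal sketch, not a full fairness evaluation. The function names, the record format, and the group labels "A" and "B" are assumptions made for illustration, and the sample records are made up.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per group.

    records: iterable of (group, predicted, actual) tuples.
    Returns a dict mapping each group to its fraction of correct predictions.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group):
    """Difference between the best and worst per-group accuracy."""
    return max(per_group.values()) - min(per_group.values())


if __name__ == "__main__":
    # Made-up illustrative records: (group, predicted label, true label)
    sample = [
        ("A", 1, 1), ("A", 0, 0),
        ("B", 1, 0), ("B", 1, 1),
    ]
    per_group = accuracy_by_group(sample)
    print(per_group)
    print(accuracy_gap(per_group))
```

A large gap does not say why the model fails for one group, but it does show who is bearing the cost, which is the starting point for fixing the training data.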

Broadening training data and diversifying AI development teams are practical responses. They do not require accepting that AI is inherently scary. They require accepting that AI reflects the choices of the people who build it.

How to Think Clearly About Scary AI Today

Framing AI as universally scary leads to paralysis. Dismissing all AI concern as hype leads to negligence. The more useful position is informed skepticism: pay close attention to where AI is making consequential decisions, who is accountable for those decisions, and what recourse exists when things go wrong.

The emperor AI and AI chi frameworks offer complementary lenses. One highlights the danger of unchecked concentration. The other points toward productive human-machine collaboration. Together they describe a path that takes AI seriously without surrendering to panic.

Scary AI is real in specific contexts. But AI is also a field where thoughtful design, clear regulation, and ongoing public scrutiny can make the technology genuinely useful. The emperor AI of Han comparison is most valuable when it motivates accountability rather than fear. And the AI of Han lens works best when it leads to broader, more representative systems rather than blanket suspicion.