AI Periodic Table: Mapping the Tools That Define Our Future

The AI periodic table is a framework that organizes artificial intelligence tools, models, and capabilities the way chemistry organizes elements: by category, function, and relationship. A future in which AI permeates every sector of work and life is not a distant hypothetical; it is already arriving in patches. The debate about America's future is increasingly a debate about AI readiness, infrastructure, and workforce adaptation. For anyone making long-term decisions, the future value factor table from finance offers a model for how compounding growth, in money or technology, scales over time. And beneath the hype, intrinsic value psychology reminds us that what we build with AI should reflect what we actually value as people.

This article explores how the AI periodic table works, what it tells us about the direction of the field, and why understanding it matters for your work and decisions.

What the AI Periodic Table Organizes

Categories, Elements, and Relationships

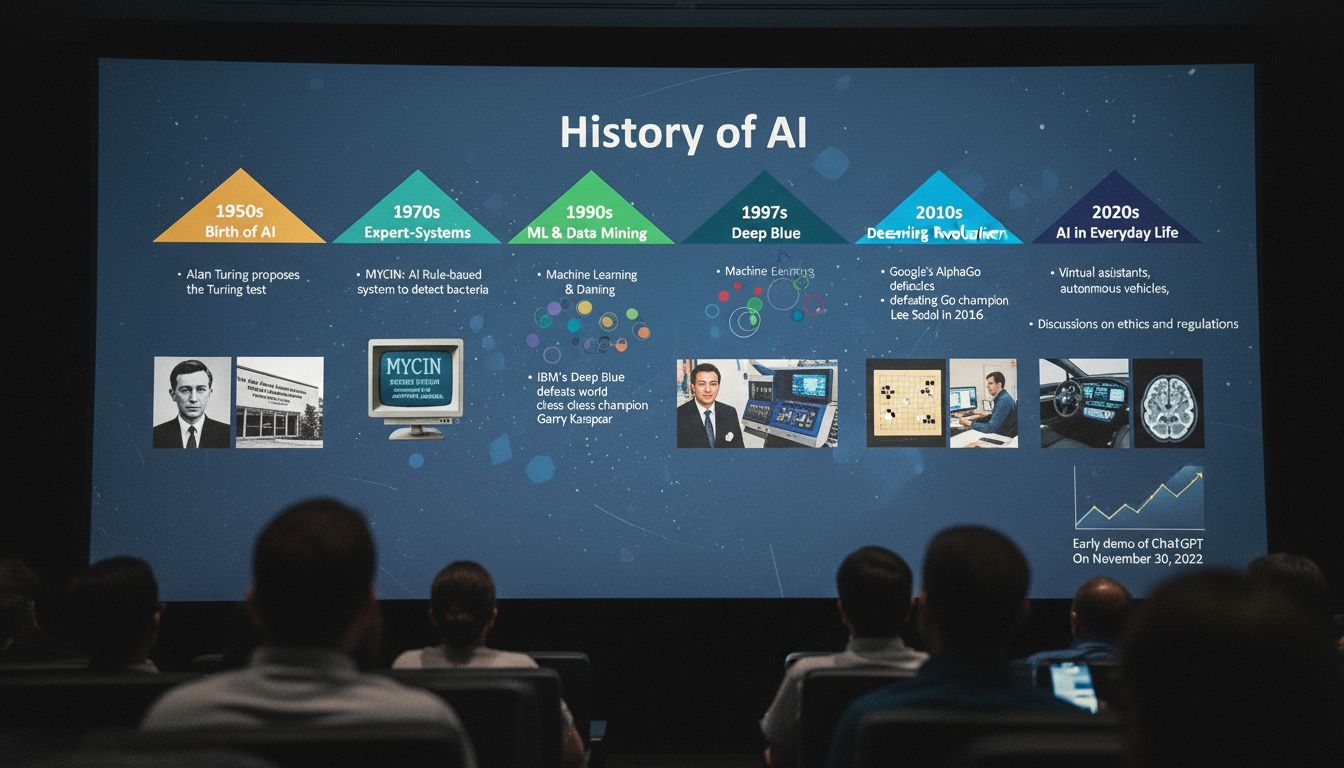

The AI periodic table groups AI technologies by their core function. Just as chemistry’s table groups elements by atomic structure and reactivity, an AI periodic table groups tools by what they do: generate, classify, predict, optimize, or reason. Common categories include large language models, computer vision systems, reinforcement learning agents, generative image tools, and speech recognition platforms.

Different organizations have published their own versions of the AI periodic table. Some focus on commercial tools, others on research categories. What they share is the idea that AI is not one thing — it is a structured ecosystem of specialized capabilities, each with its own strengths, limitations, and best-use contexts.

Understanding the AI landscape this way helps you make better decisions about which tools fit which problems. A language model is not the right choice for real-time sensor data analysis. A reinforcement learning agent is not what you want for document summarization. The periodic table framing makes these distinctions easier to navigate.
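One way to picture this kind of lookup is a small table that maps each tool category to the functions it is suited for. The sketch below is illustrative only: the function names come from the list above, but the pairings and the `TOOL_CATEGORIES` and `categories_for` names are assumptions made for this example, not taken from any published AI periodic table.

```python
# Illustrative sketch: the category-to-function pairings are assumptions,
# not an authoritative classification.
TOOL_CATEGORIES = {
    "large language model": {"generate", "reason"},
    "computer vision system": {"classify", "predict"},
    "reinforcement learning agent": {"optimize"},
    "generative image tool": {"generate"},
    "speech recognition platform": {"classify"},
}


def categories_for(function: str) -> list[str]:
    """Return the tool categories (alphabetically) tagged with a function."""
    return sorted(
        name for name, functions in TOOL_CATEGORIES.items() if function in functions
    )


if __name__ == "__main__":
    for function in ("generate", "classify", "optimize"):
        print(function, "->", categories_for(function))
```

A function with no matching category returns an empty list, which is a useful signal in its own right: none of the tools in the table fits the problem as stated.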

America's Future and the AI Readiness Gap

The question of America's future in an AI-driven world is partly a policy question and partly a talent question. The United States leads in AI research output and venture investment, but faces real challenges in translating that leadership into broad economic benefit. Automation is already displacing certain job categories faster than retraining programs can respond.

America's future in AI depends on decisions being made right now in education, immigration policy, and public infrastructure. Countries that invest in digital literacy at the K-12 level, maintain pathways for international AI talent, and build regulatory frameworks that allow innovation while managing risk are likely to hold competitive advantages over the next decade.

A future in which most knowledge work involves AI collaboration is already visible in early-adopter industries like law, medicine, and financial services. The firms and governments that prepare now will shape the rules others follow later.

Future Value Factor Table: A Model for Thinking About AI Growth

The future value factor table is a financial tool, but its logic carries over to thinking about AI adoption. Each entry in the table is the factor (1 + r)^n: how much one unit invested today is worth after n periods at a rate of return r. Applied to AI: small investments in capability, such as a well-trained team, good data infrastructure, or early adoption of productivity tools, can compound into significant competitive advantages over three to five years.

Companies that began using AI-assisted coding tools in 2021 have had several more years to build experience with them than those that waited. The future value factor table logic explains why that matters: early adoption means more iterations, more learning, and more compounded efficiency gains. Waiting shortens the growth period and reduces the final outcome.
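To make the compounding logic concrete, here is a minimal sketch of how a future value factor table can be computed, using the standard factor (1 + r)^n. The rates and periods are arbitrary illustrative inputs, not estimates of real AI returns, and the function names are chosen for this example.

```python
def future_value_factor(rate: float, periods: int) -> float:
    """Factor by which one unit grows after `periods` at `rate` per period."""
    return (1 + rate) ** periods


def factor_table(rates, periods):
    """Build {period: {rate: factor}} with factors rounded to four decimals."""
    return {
        n: {r: round(future_value_factor(r, n), 4) for r in rates}
        for n in periods
    }


if __name__ == "__main__":
    # Illustrative inputs only: 5% and 10% per period over 1, 3, and 5 periods.
    table = factor_table(rates=[0.05, 0.10], periods=[1, 3, 5])
    for n, row in table.items():
        print(n, row)

    # Cost of waiting: starting two periods late on a five-period horizon
    # leaves only three periods of growth.
    early = future_value_factor(0.10, 5)
    late = future_value_factor(0.10, 3)
    print(f"early: {early:.4f}, late: {late:.4f}")
```

At 10% per period, the early starter ends the five periods at roughly 1.61 times the starting value, while the late starter reaches roughly 1.33. The gap comes entirely from the two missing periods of growth.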

This framing is useful for individuals too. If you invest time now in understanding how AI tools work in your field, that knowledge compounds. You become a more effective user, then a more effective evaluator, then someone who shapes how your organization uses these tools.

Intrinsic Value Psychology and What AI Should Serve

Intrinsic value psychology is the study of what people value for its own sake — not as a means to something else, but as an end in itself. Autonomy, connection, creativity, and meaning consistently appear at the top of intrinsic value hierarchies across cultures. These are not things AI provides directly. They are things AI should support.

The risk in any AI deployment is optimizing for measurable outputs — speed, volume, cost — while eroding the intrinsic rewards that make work meaningful. A system that automates every routine task might free up time, but if it also removes the sense of craft or judgment that made the work satisfying, you have not gained as much as the efficiency numbers suggest.

Intrinsic value psychology offers a useful design constraint: before deploying an AI tool, ask what it does to the human experience of the work, not just the output. A future where AI is everywhere is not automatically a good one. The quality of that future depends on whether the tools we build align with what people actually find valuable.

Bottom line: The AI periodic table gives you a map. The future value framework tells you why acting early matters. Intrinsic value psychology tells you what that future should actually be for.