AI 2.0: What the Next Generation of Artificial Intelligence Really Means

AI 2.0 is a phrase that circulates in technology journalism, investor pitches, and academic papers to describe the current wave of generative and multimodal artificial intelligence systems, a step beyond the narrow AI tools of the previous decade. The “Ai Weiwei vase” is a well-known artwork in which the Chinese dissident artist painted a Coca-Cola logo on a Han dynasty urn, turning heritage into provocation. “Ai Weiwei straight” refers to Straight, a sculptural installation made from rebar salvaged from schools destroyed in the 2008 Sichuan earthquake, which challenges official narratives about construction quality. Li Ai is a name shared by several researchers and comes up in discussions of Chinese contributions to machine learning. And Ai Kyan is a character appearing in anime and manga that has become a touchstone for fan communities built around AI-generated art. These terms all carry the letters “AI” for different reasons, and tracing those differences reveals something useful about how the word itself has spread far beyond its technical origins.

This article unpacks what AI 2.0 actually refers to technically, how it connects to broader cultural conversations, and why names like Li Ai and references like Ai Kyan appear alongside serious technology discussions.

What AI 2.0 Means for Technology and Culture

From Art Provocation to Algorithmic Systems

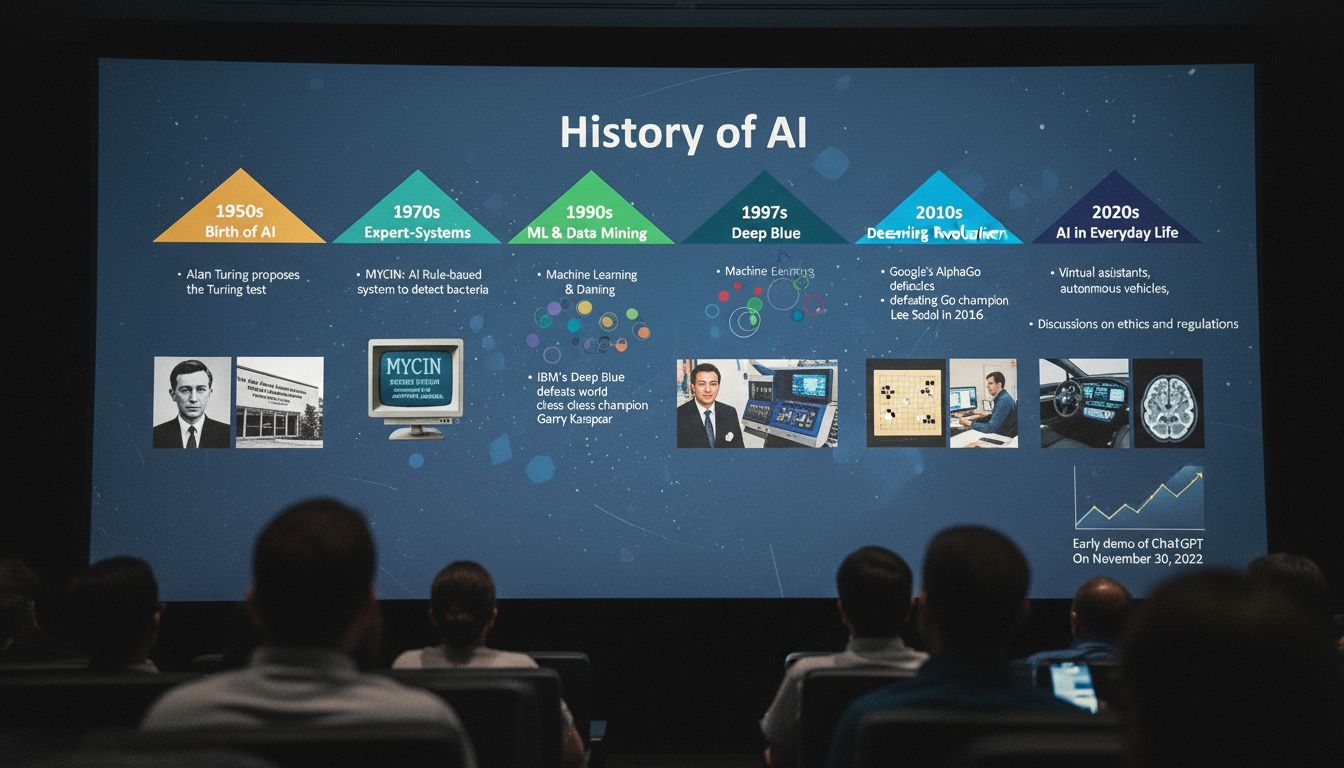

In its technical sense, AI 2.0 generally refers to large language models, diffusion models, and multimodal systems that can generate text, images, audio, or video from simple prompts. Earlier AI systems were narrow: a chess engine plays chess, a spam filter filters spam. Systems in the AI 2.0 category operate across domains, and a single model can often handle translation, summarization, coding, and multi-step reasoning, with some also producing or interpreting images.

GPT-4, Claude, Gemini, Stable Diffusion, and Sora are examples of AI 2.0 systems. What distinguishes them from earlier tools is how broadly they generalize from training data: rather than following explicitly programmed rules or learning one fixed task, they can be steered toward new tasks through prompts. This makes them flexible but also unpredictable in ways that narrow systems are not.

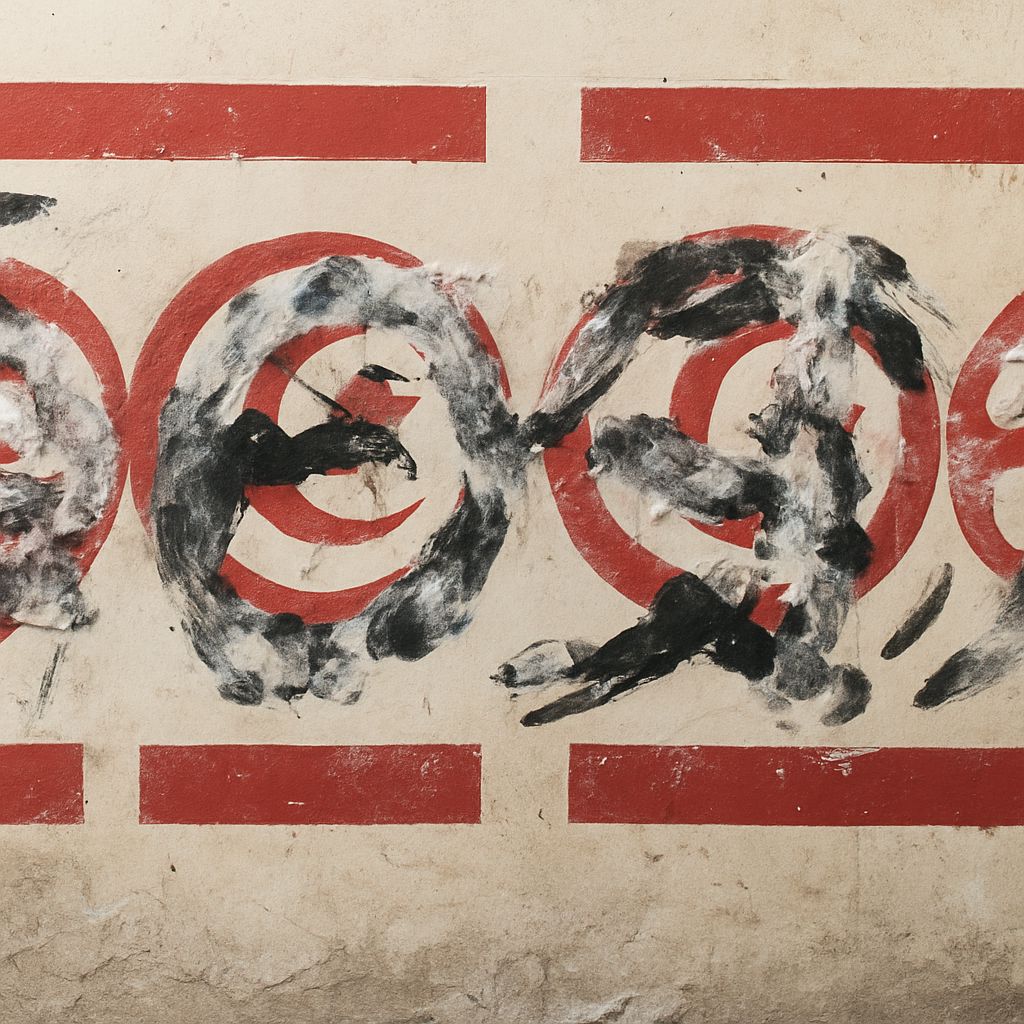

The Ai Weiwei vase belongs to a very different world, but it connects to AI discussions because the artist’s work repeatedly questions what is authentic, who controls meaning, and what happens when cultural objects are recontextualized. Generative AI raises the same questions about creativity, ownership, and originality. An AI 2.0 system can generate thousands of images in the style of a living artist without permission, a provocation parallel in some ways to painting a soft-drink logo on an ancient artifact.

Ai Weiwei straight, the installation made from earthquake rebar, raises questions about accountability and evidence. The bent rebar was straightened by hand, and the work is tied to the artist’s citizens’ investigation, which compiled the names of students who died in the collapsed schools. This kind of painstaking human documentation is now partly being supplemented by AI systems trained to identify patterns across large datasets. The comparison is imperfect but worth noting: AI 2.0 systems are increasingly used as documentation and analysis tools in disaster response, construction monitoring, and public records verification.

Li Ai, Ai Kyan, and AI in Creative Contexts

Li Ai appears in AI research contexts as the name of researchers working on natural language processing, computer vision, and reinforcement learning. The name is common in Chinese-speaking communities, and multiple researchers who share it have contributed to the published literature on machine learning. Tracking work by any one Li Ai therefore means specifying an institutional affiliation or paper title, since the name alone is not specific enough to identify one person.

The broader point is that AI 2.0 development is global. Researchers in China, South Korea, the European Union, Canada, and the United States all contribute to the field. Narrowing the conversation to any single country’s institutions misses the distributed nature of how knowledge in this area is built and shared.

Ai Kyan is a character from anime, and the name’s appearance in AI-related searches reflects how generative image tools have changed fan culture. Communities that previously drew fan art by hand now use text-to-image systems to create and share depictions of characters at scale. Ai Kyan content generated by these tools appears on platforms like Pixiv, Reddit, and DeviantArt in volumes no individual artist could match. This raises genuine questions about what fan creativity means when it is mediated by AI 2.0 systems.

AI 2.0 systems are not sentient, intentional, or creative in the way humans are. They generate plausible outputs by predicting likely continuations of input patterns. Understanding that mechanism matters for evaluating their outputs critically, whether the output is a business report, a legal summary, or an image of an anime character. The tool does not understand the content it produces. Responsibility for checking that content remains with the person using it.
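To make "predicting likely continuations" concrete, here is a minimal sketch of the idea using simple word-pair counts. It is a toy stand-in, not how GPT-4, Claude, or any production system is built: those use large neural networks over tokens, and the function names and the tiny corpus below are assumptions invented for illustration. What the sketch does share with them is the basic loop of picking a likely next item given what came before.

```python
from collections import Counter, defaultdict


def train_bigram_counts(text):
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def continue_text(counts, start, max_words=5):
    """Greedily extend `start` one predicted word at a time."""
    output = [start.lower()]
    for _ in range(max_words):
        next_word = predict_next(counts, output[-1])
        if next_word is None:
            break  # nothing ever followed this word in the training text
        output.append(next_word)
    return " ".join(output)


# Hypothetical toy corpus; a real system trains on vastly more data.
corpus = "the cat sat on the mat the cat sat on the sofa the cat slept"
model = train_bigram_counts(corpus)

print(predict_next(model, "the"))                # cat
print(continue_text(model, "the", max_words=4))  # the cat sat on the
print(predict_next(model, "zebra"))              # None
```

The output reads like a sentence, yet the program only replays statistics from its training text and has no notion of what a cat or a mat is. It also has nothing to offer for a word it never saw, which is a small-scale picture of why fluent output still needs a human check.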

The cultural spread of the “AI” label — across research, art, anime characters, and political commentary — reflects how central artificial intelligence has become to how people think about the present moment. AI 2.0 as a technical concept lives inside that broader conversation, sometimes usefully and sometimes obscured by the noise.

Key takeaways: AI 2.0 refers to generative multimodal systems that differ meaningfully from earlier narrow AI tools. Cultural references like the Ai Weiwei vase and Ai Kyan appear in the same search landscape for different reasons. Knowing what each term actually refers to makes it easier to follow real developments in artificial intelligence without getting lost in unrelated results.